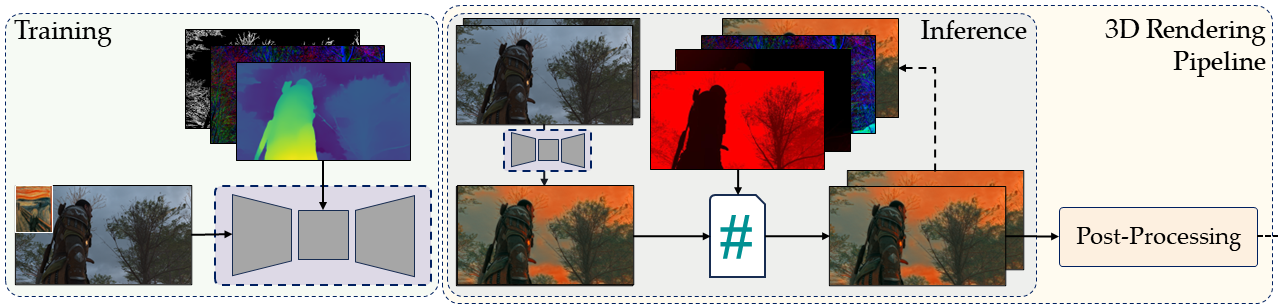

Artistic Neural Style Transfer (NST) has achieved remarkable success for images. However, this is not the case for dynamic 3D environments, such as computer games, where temporal coherence remains a challenge. Our paper presents an approach that uses the G-buffer information available in a game pipeline to generate robust and temporally consistent in-game artistic stylizations based on a style reference image. We use a synthetic dataset created from open-source computer games and demonstrate that using depth, normals, and edge information makes the stylization process more aware of the geometric and semantic aspects of a game scene. The proposed approach builds on previous work by injecting style transfer into the rendering pipeline, while also using G-buffer information at inference time to improve the stability of the stylizations. This offers a controllable way to stylize computer games in terms of temporal coherence and content preservation. Qualitative and quantitative evaluations of our in-game stylization network demonstrate significantly higher temporal stability than existing style transfer approaches when stylizing 3D computer games.
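To make the idea of G-buffer conditioning more concrete, the sketch below shows one possible way to stack per-pixel color, depth, normals, and an edge mask into a single feature tensor that a stylization network could consume. This is a hypothetical illustration, not the paper's implementation: the 8-channel layout, the function names `sobel_magnitude` and `build_gbuffer_input`, and the choice to derive edges by applying a Sobel filter to the depth buffer with a fixed `edge_threshold` are all assumptions made for this example. It uses plain Python lists so that it runs without extra dependencies.

```python
from typing import List

Channel = List[List[float]]          # H x W single-channel buffer (e.g. depth)
Vec3Buffer = List[List[List[float]]]  # H x W x 3 buffer (RGB color or normals)
Features = List[List[List[float]]]    # H x W x C stacked per-pixel features


def sobel_magnitude(buf: Channel) -> Channel:
    """Gradient magnitude of a single-channel buffer using 3x3 Sobel kernels.
    Border pixels are left at 0.0 for simplicity."""
    h, w = len(buf), len(buf[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = (buf[y - 1][x + 1] + 2 * buf[y][x + 1] + buf[y + 1][x + 1]
                  - buf[y - 1][x - 1] - 2 * buf[y][x - 1] - buf[y + 1][x - 1])
            gy = (buf[y + 1][x - 1] + 2 * buf[y + 1][x] + buf[y + 1][x + 1]
                  - buf[y - 1][x - 1] - 2 * buf[y - 1][x] - buf[y - 1][x + 1])
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out


def build_gbuffer_input(rgb: Vec3Buffer, depth: Channel, normals: Vec3Buffer,
                        edge_threshold: float = 0.5) -> Features:
    """Hypothetical 8-channel layout: [R, G, B, depth, nx, ny, nz, edge].
    The edge channel is a binary mask of depth discontinuities (an assumption)."""
    edge_strength = sobel_magnitude(depth)
    h, w = len(depth), len(depth[0])
    features: Features = []
    for y in range(h):
        row = []
        for x in range(w):
            edge = 1.0 if edge_strength[y][x] > edge_threshold else 0.0
            row.append(list(rgb[y][x]) + [depth[y][x]] + list(normals[y][x]) + [edge])
        features.append(row)
    return features


# Tiny synthetic 4x4 frame: a depth step between columns 1 and 2,
# uniform gray color, and normals all facing the camera.
H, W = 4, 4
depth = [[0.0, 0.0, 1.0, 1.0] for _ in range(H)]
rgb = [[[0.5, 0.5, 0.5] for _ in range(W)] for _ in range(H)]
normals = [[[0.0, 0.0, 1.0] for _ in range(W)] for _ in range(H)]

feats = build_gbuffer_input(rgb, depth, normals)
print(len(feats[0][0]))                   # 8 channels per pixel
print(feats[1][1][-1], feats[0][0][-1])   # 1.0 0.0 (edge at the step, none on the border)
```

In a real engine these buffers would come from the renderer's G-buffer passes rather than hand-built lists, and the features would typically be normalized before being fed to the network; the point here is only that geometry-aware channels are available per pixel at inference time, independent of the shaded color.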

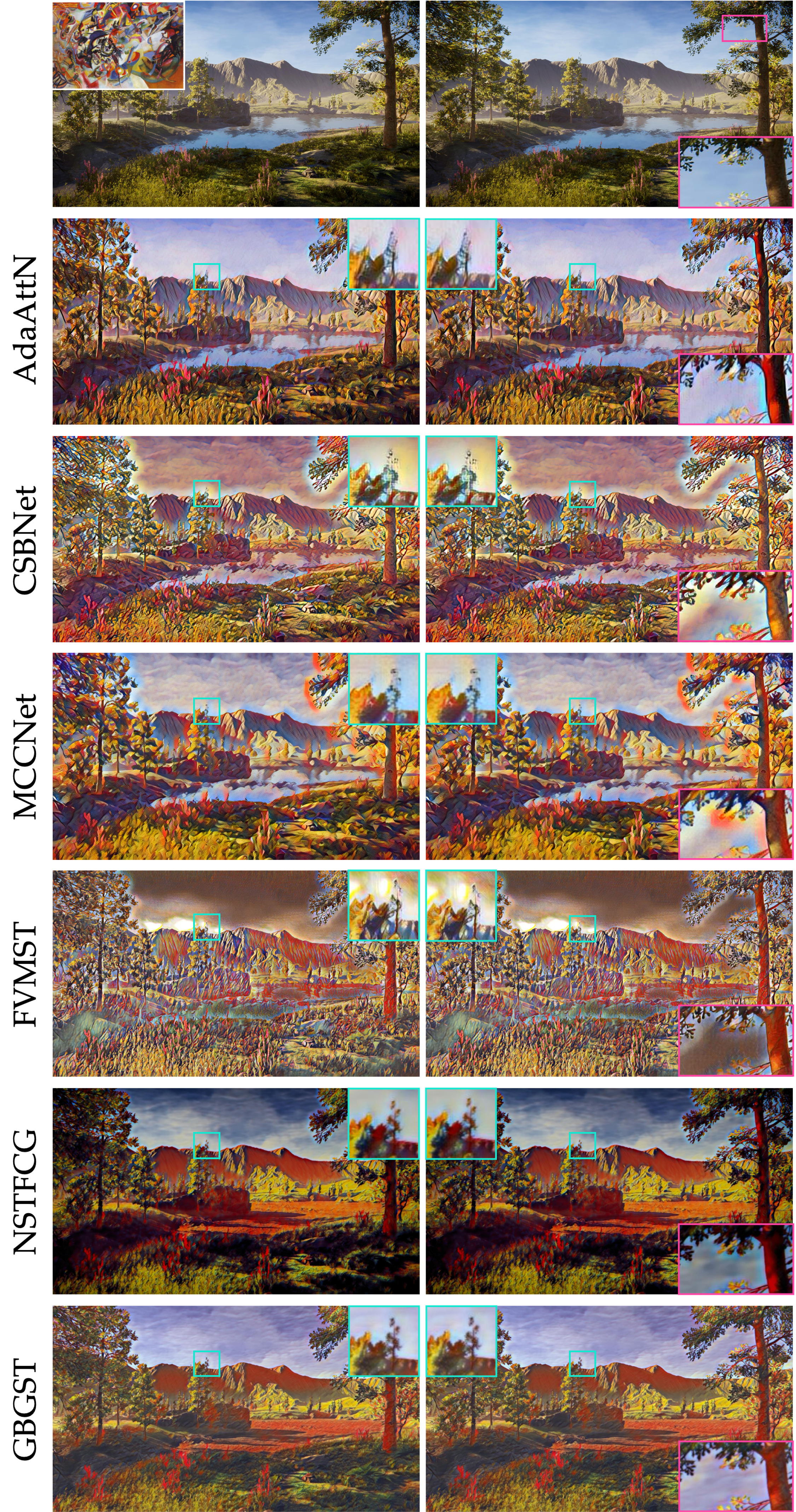

Comparison of our method's results with state-of-the-art methods.

Our Results

Comparisons against state-of-the-art methods

The results shown here are compressed videos. For higher-quality results, please refer to the Google Drive link.